Our publication, “Deep learning for digital pathology image analysis: A comprehensive tutorial with selected use cases”, showed how to use deep learning to address many common digital pathology tasks. Since then, many improvements have been made both in the field and in my implementation of them. In this blog post, I revisit the nuclei segmentation use case using the latest and greatest approaches.

Previous Approach

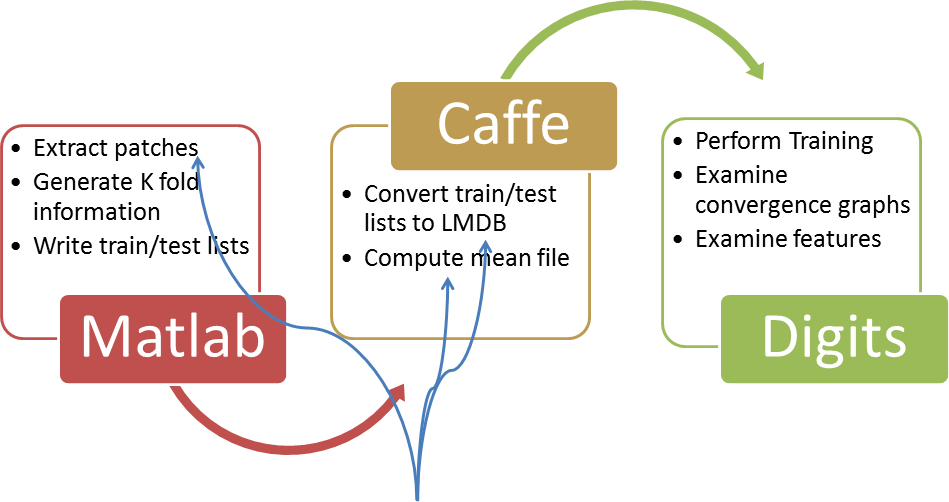

Please refer to the blog post which provides a tutorial on how the nuclei segmentation was performed in the previous version. As shown in the figure above, in that approach Matlab solely extracted the patches and determined which fold of the evaluation scheme they belonged to. Subsequently, the tools provided by caffe were used to (a) put the patches into a database and then (b) compute the mean of the entire database. Overall, this essentially requires manipulating each patch 3 separate times: the first time to generate it, the second time to place it in the DB, and the third time to add its contribution to the overall mean. Clearly this wasn’t optimal, but at the time it was the most straightforward way, as it limited the dependencies and leveraged tools which were already written. This also had another implicit constraint. We were using a ramdisk to hold the patches before converting them to a database because pulling them from disk was incredibly slow, so we either (a) were limited to the size of the RAM of the machine or (b) had to accept the time penalty for using the disk.

Revised Approach

In the revised approach shown above, we now use Matlab to extract the patches, immediately place them into the database, and compute the mean in line with the patch generation process. Thus, in this implementation, we’re never required to pull the patch from disk: it’s created in memory, used for all purposes, and then written to disk. In this way, it is also only manipulated a single time. This is clearly a more optimized approach, but it required additional code and dependencies. In this case, we use not only matcaffe, which is provided by caffe, making it straightforward, but also the caffe-extention branch of the matlab-lmdb project, which provides a Linux-only wrapper from Matlab to LMDB. Besides this, the remainder of the approach (i.e., how to select patches, etc.) remains the same. Additionally, the database size is no longer bounded by RAM, since we’re writing the patches directly to disk (which is also greatly sped up through the usage of transactions and caching in the LMDB layer).
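To make the single-pass idea concrete, here is a minimal Python sketch of the running-mean update (the actual implementation is in Matlab). It assumes patches arrive in per-image batches and mirrors the update used in step2_make_lmdb.m; the function name, the random data, and the batch sizes are hypothetical, and the DB write is only indicated by a comment.

```python
import numpy as np


def update_running_mean(mean_data, total_patches, local_sum, local_num):
    """Fold one image's patches into the running mean.

    mean_data     : mean over all patches seen so far (H x W x C)
    total_patches : number of patches seen so far, excluding this image
    local_sum     : element-wise sum of this image's patches (H x W x C)
    local_num     : number of patches extracted from this image
    """
    total_patches += local_num
    mean_data = (mean_data * (total_patches - local_num) / total_patches
                 + local_sum / total_patches)
    return mean_data, total_patches


rng = np.random.default_rng(0)
# Three hypothetical images, each yielding a different number of 64 x 64 x 3 patches.
batches = [rng.random((n, 64, 64, 3)) for n in (5, 12, 3)]

mean_data = np.zeros((64, 64, 3))
total_patches = 0
for patches in batches:
    # In the real pipeline, each patch would also be written to the DB here,
    # so no patch is ever read back from disk.
    mean_data, total_patches = update_running_mean(
        mean_data, total_patches, patches.sum(axis=0), len(patches))

# The running mean matches the mean computed over all patches at once.
reference = np.concatenate(batches).mean(axis=0)
print(np.allclose(mean_data, reference))
```

Because only the running mean and a patch count are kept in memory, the memory footprint does not grow with the number of patches.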

Details

1. Caffe’s convert_imageset tool is a one-shot deal in the sense that it doesn’t allow adding to the database after it’s created; in particular, the C++ line:

db->Open(argv[3], db::NEW);

will throw an error if the database already exists. While this was acceptable before, since we extracted all the patches ahead of time and “had no more to give”, the ability to add patches later on is now a reality, since in Matlab we can specify to append to the database instead of overwriting. Not a huge deal (one could re-compile the code to allow for modification), but it’s a nice benefit of this approach.

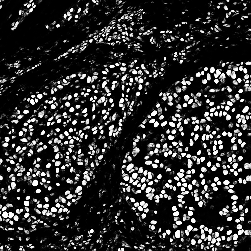

2. This approach has some additional code which generates “layout” files, which show where the patches were extracted from on the original image. They look something like this, where red pixels and green pixels represent the locations of the positive and negative patches which were extracted:

In general, since we’re extracting sub-samples from a mask, it’s nice to get a quick high level overview of what is being studied. It also helps when working with students to be able to review the final input to the DL classifier without having to visually examine the patches themselves.

3. The code is fairly well commented, in particular one should review func_extraction_worker_w_rots_lmdb_parfor.m, which is where the actual DB transactions occur.

Modified Network

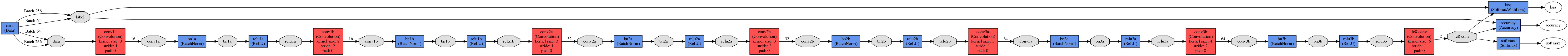

The network we use here is a bit larger (accepts patches of 64 x 64), and contains a few interesting features:

1) The addition of batch normalization layers before the activation layers, for more information see here.

2) Removal of pooling units. We use additional convolutional layers to gently reduce the size of the input at each layer. Conceptually, I like this as it implies that we have the chance to learn an optimal “pooling” function to some extent. Ultimately though, I’ve tried these types of networks (with and without pooling), and have seen very little difference in the overall output.

3) Removal of fully connected layers and replacing them with convolutional layers. This implies that we can now directly use the network (after modifying the deploy file slightly to adjust for input image size) in the efficient DL approach without having to do any network surgery. Notice, as well, that there is still no padding on any layer, and in fact each layer’s kernel size and stride were chosen so that the output size of the layer is an integer (usually padding is used to provide this “correction”).

4) We use a VGG-style approach in selecting the number of kernels, wherein, as the network gets deeper and the input size shrinks, we increase the number of kernels so that essentially each layer is computed in the same amount of time. This affords us a better ability to predict how long training and testing will take. This network sports ~100k free parameters, which appears to be overkill for this process. When we removed the “step” increases of kernels and used a fixed kernel number of 8 at each layer (as opposed to 8, 16, and then 32 on the final layers), we saw little change in overall performance, even though the parameter space was a much smaller ~10k.
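The “no padding, integer output size” constraint from point 3 can be checked with a few lines of arithmetic. The sketch below is illustrative only: the (kernel, stride) pairs are hypothetical and are not read from the post’s prototxt. They were picked so that a 64 x 64 patch shrinks to a single output and the strides multiply to 8, matching the 8:1 pixel ratio discussed in the Output section.

```python
def conv_output_size(input_size, kernel, stride):
    """Width of an unpadded convolution's output; raises if it is not an integer."""
    span = input_size - kernel
    if span < 0 or span % stride != 0:
        raise ValueError(
            f"kernel {kernel} / stride {stride} does not tile an input of "
            f"{input_size} exactly"
        )
    return span // stride + 1


def trace_sizes(input_size, layers):
    """Return the feature-map width after every layer, starting with the input."""
    sizes = [input_size]
    for kernel, stride in layers:
        sizes.append(conv_output_size(sizes[-1], kernel, stride))
    return sizes


# Hypothetical (kernel, stride) pairs chosen for illustration only;
# they are NOT the layers of the actual network.
layers = [(4, 2), (3, 1), (3, 2), (4, 2), (3, 1), (4, 1)]

patch_sizes = trace_sizes(64, layers)
print(patch_sizes)  # [64, 31, 29, 14, 6, 4, 1]
```

Swapping the first layer for a 3 x 3 kernel with stride 2 would raise an error, since (64 - 3) is not divisible by 2; that is the situation where padding would normally be needed.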

You can find the updated prototxt here, and the visualization below (click for large version):

Output

Note here that the input patches are 64 x 64, which guarantees a larger receptive field that we need to operate over when doing the interpolation. For example, if we take the output, without any image re-scaling, interpolation, or effort to manage the large receptive field, we obtain a 251 x 251 image, which is “accurate”, but still at an 8:1 pixel ratio, so the boundaries are clunky:

On the other hand, if we do basic interpolation, wherein we basically rescale the output (smaller) image up to the original image size, we see this type of result:

In particular, we can see that it is a bit grainy and “boxy”, as a result of interpolating values between the pixels which were actually computed. By computing more versions across the receptive field, ideally one for each pixel (in this case, the “displace_factor=8” option in the python output generation file), we can see a much cleaner output, in line with what we’d expect:

As reference, this is the original input (though compressed for easier web load):

Of course this comes with the caveat of requiring additional computation: 98 seconds versus 1.5 seconds for the single-pass approach. Unsurprisingly, we could have estimated this, as each pass over the image takes 1.5 seconds, and now we have 8 * 8 = 64 displaced sub-images, for a total of 1.5 seconds / image * 64 images = 96 seconds. The additional 2 seconds are likely due to the requirement of interpolating the multiple images together.
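The displacement idea and its cost can be sketched as follows. This is a simplified illustration under stated assumptions, not the output generation script: coarse_forward is a hypothetical stand-in for one forward pass of the network (it simply samples every 8th pixel), and the displaced outputs are merged by direct interleaving instead of the scipy interpolation used in the actual python file.

```python
import numpy as np

STRIDE = 8  # the 8:1 pixel ratio between the input image and the network output


def coarse_forward(image):
    """Hypothetical stand-in for one forward pass: one value per STRIDE pixels."""
    return image[::STRIDE, ::STRIDE]


def dense_output(image, displace_factor):
    """Run one pass per (dy, dx) shift and interleave the coarse outputs."""
    if STRIDE % displace_factor != 0:
        raise ValueError("displace_factor must divide STRIDE")
    step = STRIDE // displace_factor
    out = np.full(image.shape, np.nan)  # NaN marks pixels that were never computed
    passes = 0
    for dy in range(0, STRIDE, step):
        for dx in range(0, STRIDE, step):
            # Shift the image, run the "network", and drop the coarse result
            # back onto the full-resolution pixels it corresponds to.
            out[dy::STRIDE, dx::STRIDE] = coarse_forward(image[dy:, dx:])
            passes += 1
    return out, passes


image = np.arange(64 * 64, dtype=float).reshape(64, 64)

dense, passes = dense_output(image, displace_factor=8)
estimated_seconds = passes * 1.5  # 1.5 seconds per pass, as measured in the post

print(passes)             # 64
print(estimated_seconds)  # 96.0
```

With displace_factor=1 only a single pass is made and every other pixel stays empty, which is the case where interpolation has to fill in the gaps.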

Future Advancements

One aspect which is left is that the code which writes to the DB doesn’t currently support writing encoded patches. An encoded patch in this case is one which is in a compressed image format such as jpg, png, etc. This means that the caffe datums which are being stored contain uncompressed image patches, resulting in a larger than necessary database size. While matlab-lmdb does support the concept of writing encoded datums, they need to be in a binary format, piped in through a file descriptor. This implies a necessity of writing a patch to disk in the appropriate format (e.g., png), and then opening a file descriptor to this file so that the exact byte stream can be loaded. Ultimately, a ramdisk could be used to do this, but I can’t see the additional code complexity being worth it. Although it would decrease the database size, it would likely also increase the training time, as each of the images would need to be decoded before being shipped to the GPU. By writing uncompressed images to the DB, we can forgo that whole process. After all, I delete the databases after each iteration of algorithm development, so given their ephemeral nature it’s not really a good time investment to shrink them.

Components of code available here and here

Note: The binary models in the github link above were generated using nvidia-caffe version .14 and aren’t forward compatible with later versions (currently .15).

Hello,

When running step2_make_lmdb.m, I get the following error. Do you by any chance have an idea on how I may be able to address it?

Error using +

Matrix dimensions must agree.

Error in step2_make_lmdb (line 73)

mean_data= mean_data*(total_patches-local_num)/total_patches + local_sum/total_patches; %compute the running sum

what is the size of local_sum versus mean_data? everything else in that equation should be a scalar value

local_sum is 64 * 64 and mean_data is 64*64*3

is it possible that the first image you extracted patches from was grayscale (ndim=1), and the second image was in RGB (ndim=3)? if you look at line 65 of the func_extrat…. total_sum=zeros(hwsize*2,hwsize*2,ndim_image); this variable becomes “local_sum” when it is returned, so its size is tightly coupled to the input image dimensions

That was it- thank you! I was using my own grayscale data set which explains the discrepancy.
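For readers who hit the same mismatch, one possible guard is to normalize every image to the same number of channels before extracting patches, so that the per-image sum and the running mean always have the same shape. The sketch below is in Python and is not part of the Matlab code; as_three_channel is a hypothetical helper.

```python
import numpy as np


def as_three_channel(image):
    """Return an H x W x 3 array, replicating a grayscale plane if necessary."""
    image = np.asarray(image)
    if image.ndim == 2:            # H x W grayscale
        image = image[:, :, np.newaxis]
    if image.ndim != 3:
        raise ValueError(f"expected a 2-D or 3-D image, got shape {image.shape}")
    if image.shape[2] == 1:        # H x W x 1 grayscale
        image = np.repeat(image, 3, axis=2)
    if image.shape[2] != 3:
        raise ValueError(f"unsupported number of channels: {image.shape[2]}")
    return image


gray = np.zeros((64, 64))
rgb = np.zeros((64, 64, 3))
print(as_three_channel(gray).shape, as_three_channel(rgb).shape)
```

The alternative is to keep a purely grayscale dataset, in which case the mean is simply 64 x 64 throughout.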

Is there a reason not to use python instead of the matlab code you present?

Not at all, both are a means to an end. In my case, I already have a ton of matlab code to handle the specific files that we deal with, in particular large svs or big tiff files and their associated xml annotations. I have some pending code on how to create databases using python directly but haven’t gotten around to writing it up yet.

Hello.

I am getting the follow error when I run “make_output_image_reconstruct-with_interpolation-advanced_cmd.py”:

WARNING: Logging before InitGoogleLogging() is written to STDERR

W1106 15:38:35.569145 28185 _caffe.cpp:122] DEPRECATION WARNING – deprecated use of Python interface

W1106 15:38:35.569164 28185 _caffe.cpp:123] Use this instead (with the named “weights” parameter):

W1106 15:38:35.569169 28185 _caffe.cpp:125] Net('deploy_full.prototxt', 1, weights='full_convolutional_net.caffemodel')

[libprotobuf ERROR google/protobuf/text_format.cc:245] Error parsing text-format caffe.NetParameter: 42:18: Message type “caffe.BatchNormParameter” has no field named “scale_filler”.

F1106 15:38:35.570405 28185 upgrade_proto.cpp:88] Check failed: ReadProtoFromTextFile(param_file, param) Failed to parse NetParameter file: deploy_full.prototxt

*** Check failure stack trace: ***

Aborted (core dumped)

Best regards!

Are you using the latest version of the Nvidia Caffe fork, which is slightly different than the BVLC version so that it works with Digits?

Yes, the problem was the Caffe version. Thank you!

Hello, thank you for sharing your code and great guidance. I am running in the last step (step5) using the command

step5_create_output_images_kfold.py ./BASE/ 2

in which ./BASE is set up as in your guidance. It contains an original image which needs to be segmented. However, I got the following error:

Traceback (most recent call last):

File “step5_create_output_images_kfold.py”, line 83, in

scipy.misc.imsave(newfname_class, outputimage) #first thing we do is save a file to let potential other workers know that this file is being worked on and it should be skipped

File “/usr/local/lib/python2.7/dist-packages/scipy/misc/pilutil.py”, line 197, in imsave

im.save(name)

File “/usr/lib/python2.7/dist-packages/PIL/Image.py”, line 1462, in save

fp = builtins.open(fp, “wb”)

IOError: [Errno 2] No such file or directory: ‘./BASE//images/2/12750_500_f00003_original_class.png’

As a command line parameter, you need to replace BASE with the appropriate subdirectory/type you’re working with. See the command line help: parser.add_argument('base', help="Base Directory type {nuclei, epi, mitosis, etc}").

Shoot, who would have thought that it was that easy?

Can you please elaborate on what the ‘base’ and ‘fold’ should be replaced with for lines 19 and 20? Whenever I run the script I get the following error:

usage: STEP_5_NOCOMMENT.py [-h] nuclei 2

STEP_5_NOCOMMENT.py: error: too few arguments

What should I do?

Not sure I understand, there is no step 5 in this approach?

Hi Andrew,

Thanks for making all of your code available, and for documenting it all. I’m looking forward to trying it on some of my H&E data.

I tried loading the Caffe model from the folder for this blog post (DL Tutorial Code – LMDB) in your git repo in python but am getting a shape mismatch error:

Cannot copy param 2 weights from layer ‘bn1a’; shape mismatch. Source param shape is 1 1 1 1 (1); target param shape is 1 16 1 1 (16).

The line I’m executing is:

net_full_conv = caffe.Net("deploy_full.prototxt", "full_convolutional_net.caffemodel", caffe.TEST)

It’s the line from the python script in the same folder (make_output_image_reconstruct-with_interpolation-advanced_cmd.py), with the default arguments. Are those the correct model and prototxt files I should use, or might there be something else wrong?

Thanks!

The binary files were produced using version .14 of nvidia-caffe and we are discovering that they are not forward compatible with later versions (.15 is the current version). What version are you using?

That was indeed the problem. I was using version .15. I checked out the .14 branch of nvidia-caffe and built from it instead and was able to run the model successfully. Thanks!

Not a problem, glad to help 🙂

Ha I’m here. I noticed the matlab-lmdb is for UNIX I wonder if it will compile on windows?

I got lots of errors like:

C:\Users\ding\AppData\Local\Temp\mex_8670067939871759_6064\LMDB_.obj:LMDB_.cc:(.text+0xe39): undefined reference to `mdb_txn_abort’

I wonder if this is something to do with the cross-platform?

(I’m very new to other environment sorry about the newbie question!)

Thanks!

Ah, so with the lmdb wrapper code, I don’t know if it is windows compatible. You’ll have to see if you can get it to compile :-\

AHA, after so many days of trying out different things I have finally compiled lmdb wrapper on Windows. the solution is to mex LMDB_ with matlab mingw 4.9.2, then mex -setup and switch to visual studio and mex caffe.pd_

now I’m running step 2.. surprisingly, I have to run it on my HDD, as my SSD had 120GB left and I got the error saying there’s not enough space :S

😀 1T per data file

Hmmm interesting. Why not try using the same code on a linux machine to remove the doubt of there being any bugs in your code?

I actually did think about it, but even thinking about installing everything (matlab, caffe, apps etc) gave me a headache…:D

sorry about more of my beginner’s questions, I’m looking at the python file and realised the full_convolutional_net.caffemodel is no longer compatible…I assume I need to train my own model, using e.g.

/c/Projects/caffe/build/tools/Release/caffe train --solver=adasolver.prototxt ?

Thanks a lot!

Probably. There have been some compatibility issues between versions and networks. I would recommend training your own network, but using Nvidia Digits instead of the command line (I have some things on my blog discussing it). It’s *far* easier and nicer!

Thanks a lot for recommending Digits! I got super excited when browsing the website and I was like..OMGOMG A GUI for TRAINNING! Ohhh wait…it has limited function on windows and only works with python2 :O but I’ll try to install it first see if I can get it to work

Hi Andrew, thank you so much for all your help!

I’m nearly able to complete all the step on windows and currently trying to create the image segmentation model on digits.

Since the model I got from your github is no longer compatible with the latest version of caffe, I wonder if you can suggest a way to make your layer file compatible?

basically the issue is with the

Bad network: Not a valid NetParameter: 42:5 : Message type “caffe.BatchNormParameter” has no field named

scale_filler/bias_filler/engine: CUDNN

thanks a lot! 😀

Are you using the nvidia version of caffe or the official branch? to use Digits (and some parts of the network you’re referring to), it needs to be from here. Otherwise i know that the official caffe git has batch normalization, but it needs to be specified differently

Thanks Andrew for the reply 😀

I deleted batchnormparameter for now and managed to go through the training. now I’m at the one final step where I got the error: “invalid shape for input data points” at “interp = scipy.interpolate.LinearNDInterpolator( (xx_all,yy_all), zinter_all) ”

I think it might have to do with the dimension, or maybe with the additional thing I added earlier, “displace_factor = round(args.displace)-1”. If I use the original script without the ‘-1’, I get a blob not matching error.

Do you think this can also be a python2->3 issue?

Thanks a lot!

some more details:

if i add len(xx_all), i get

(2000,2000,3)

(2032,2032,3)

any advice? 😀

not really sure what you mean? are you running the code as is with the prescribed dataset? everything should fit together very nicely

Sorry, I don’t have any idea, i’ve never seen that error before :-\

no worries 😀

i realise the (2000,2000,3)(2032,2032,3) was actually from print(im.shape) not from len(xx_all)

could you suggest a better way to debug this?

thanks a lot!

Dear Ding

How did you complete all the steps on windows? Could you give me your email? I’d like to ask you some questions.

Hi Andrew, after spending sooooooo long I have finally been able to run your implementation on windowssss!!!! im super excited now 😀

the last error was another rookie error.. i didn’t understand the true meaning of the deploy prototxt and was using the train_val prototxt..

Great job!

Hello! Would it be possible for you to email me the classifier for the lymphocyte detection code? I’ve been attempting to train my own but I’m having some difficulty understanding it all. I am a Drexel undergrad student studying electrical engineering looking into deep learning.

what exactly do you mean? both the model and the code should be available in github?

Thanks for your great model as well. I wanna know why in deploy, the size is the size of whole image? while in the training phase that is the size of patch?? How this line: ”net_full_conv.forward_all(data=np.asarray([transformer.preprocess(‘data’, im)]))” can predict all nuclei in the whole image? I do not understand your idea? But it was a great idea!!

the deploy file specifies what size the input will be resized to before feeding it into the network. so if you pass in a large image, with the original deploy file, it will resize it down to a single patch and you will only get a single output. by having a network based on convolutions, it can in fact take an image of any size and be run through without an issue, producing an image of a smaller size. try walking through the network mathematically by hand and i think you’ll see it works without a problem. you can also check out FCNs, which are similar to this approach, for additional reading

Dear Janowczyk,

I followed the tutorial to do nuclei segmentation. And in the last step, when I run “make_output_image_reconstruct-with_interpolation-advanced_multichan_cmd.py”, I got this error:

python '/home/sci/Desktop/public-master/DL_output_generation/make_output_image_reconstruct-with_interpolation-advanced_multichan_cmd.py' ["-lconv3b","-c1","-p64","-d8","-bDB_train_1.binaryproto","-msnapshot_iter_600000.caffemodel","-ydeploy.prototxt","-o./out/","8867_500_f00018_original.tif"]

Traceback (most recent call last):

File “/home/sci/Desktop/public-master/DL_output_generation/make_output_image_reconstruct-with_interpolation-advanced_multichan_cmd.py”, line 161, in

net_full_conv = caffe.Net(args.deploy, args.model, mode)

RuntimeError: Could not open file deploy_full.prototxt

My caffe version is caffe-nv 0.14.5, Digits version is 5.1-dev.

Could you give me some advice?

Thanks!

try using the entire set of files here (deploy, python, etc):

https://github.com/choosehappy/public/tree/master/DL%20Tutorial%20Code%20-%20LMDB/obsolete

also, that’s a pretty intense set of command line parameters, you do understand that it would produce a set of files showing the activations at the conv3b level and not the final predicted classes, right?

Excuse me, I can’t find the colour_deconvolution.m function in use case 3. Does it mean I need to write it on my own?

it is available here: https://github.com/choosehappy/public/blob/master/Misc-Utils/colour_deconvolution.c

Hi choosehappy,

Is there a way I can communicate with you through e-mail? We’re encountering a list of issues.

If you’d like to contact me first, my e-mail is: ayman.tira@gmail.com

Our issues are mainly focused on step 5. I’d also like to ask about the possibility of running the cases through a deep learning cloud service.

you can find my contact information on my CV, available on the “about me” page 🙂

Hello,

It appears that the link to the updated prototxt is broken, it leads to a 404 error on Github. Has it been re uploaded somewhere else?

Thanks!

in fact, the latest set of the code doesn’t require the updated prototxt

it should accept the deploy.txt produced directly by digits, modify it, and reload it on the fly

Hi, great stuff! I’m interested in running this model myself. I’m having a hard time locating the model and its weight file. Could you please point me in the right direction?? Thanks in advance!

hmmm, have you checked in here: https://github.com/choosehappy/public/tree/master/DL%20Tutorial%20Code%20-%20LMDB hopefully everything is there